Wednesday, April 30, 2014

Tuesday, April 29, 2014

New pencil and paper encryption algorithm

Handycipher is a new pencil-and-paper symmetric encryption algorithm. I'd bet a gazillion dollars that it's not secure.Details of apple's fingerprint recognition

This is interesting:Touch ID takes a 88x88 500ppi scan of your finger and temporarily sends that data to a secure cache located near the RAM, after the data is vectorized and forwarded to the secure enclave located on the top left of the A7 near the M7 processor it is immediately discarded after processing. The fingerprint scanner uses subdermal ridge flows (inner layer of skin) to prevent loss of accuracy if you were to have micro cuts or debris on your finger.

With iOS 7.1.1 Apple now takes multiple scans of each position you place finger at setup instead of a single one and uses algorithms to predict potential errors that could arise in the future. Touch ID was supposed to gradually improve accuracy with every scan but the problem was if you didn't scan well on setup it would ruin your experience until you re-setup your finger. iOS 7.1.1 not only removes that problem and increases accuracy but also greatly reduces the calculations your iPhone 5S had to make while unlocking the device which means you should get a much faster unlock time.

Sunday, April 27, 2014

Ruby on Rails, Varnish and smartest CDN on the planet. That means microsecond response:

Ruby on Rails performance is a topic that has been widely discussed. Whichever the conclusion you want to make about all the resources out there, the chances you’ll be having to use a cache server in front of your application servers are pretty high. Varnish is a nice option when having to deal with this architecture: it has lots of options and flexibility, and its performance is really good, too.However, adding a cache server in front of your application can lead to problems when the page you are serving has user dependent content. Let’s see what can we do to solve this problem.

The thing about user dependent content is that, well, depends on the user visiting the site. In Rails applications, user authentication is usually done with cookies. This means that as soon as a user has a cookie for our application, the web browser will issue it along the request. Here comes a big problem: Varnish will not cache content when it’s requested with a cookie. This will kill your performance for logged in users, as Varnish will simply forward all those requests to the application.

A good approach to get a simple solution to this problem is to add the application cookie to the hash Varnish uses to look for cached content. This is done in the

vcl_hash function of the config file:

sub vcl_hash {

if (req.http.Cookie ~ "your_application_cookie") {

hash_data(req.url);

hash_data(req.http.Cookie);

return (hash);

}

}

|

The problem with the above solution is that we will be caching a lot of content that is not really user specific, as we cache entire requests, probably entire web pages. We can opt for a more fine grained solution that is not perfect but will probably give us a bit more of performance. We can issue our main pages without the content that depends on the user, leaving those contents for specific URL’s that can be loaded via AJAX after the main page has been loaded. If we use this solution, we can cache almost every single page with a single key (hash), and cache only the content that is really different for each user (for example, the typical menu on the top of the page with your username and other user related info).

To make this solution work, we have to tell Varnish to delete all cookies but the ones that are directed to the user related content URL’s. This has to be done both ways: from the client to the application and vice versa. To do so, we have to tweak

vcl_recv and vcl_fetch.

In

vcl_recv we tell Varnish what to do when it receives a

request from the client. So here we will delete the cookies from all the

requests but the ones that are needed so our Rails application knows

who we are:

sub vcl_recv

{

if (!(req.url ~ "/users/settings") &&

!(req.url ~ "/user_menu"))

{

unset req.http.cookie;

}

}

|

req.http.cookie.

With this we have half the solution baked. We also need to do the same thing when our application talks to Varnish: delete the cookies from the application response to the client except the ones that actually log the user in (if we didn’t do that we could never log in to the application because we could never receive the cookie from the application). This is done in the

vcl_fetch section:

sub vcl_fetch

{

if !(req.url ~ "^/login") && (req.request == "GET") {

unset beresp.http.set-cookie;

}

}

|

And that’s all we need to have a little more fine grained control on what content is cached depending on the user using Varnish as our cache server.

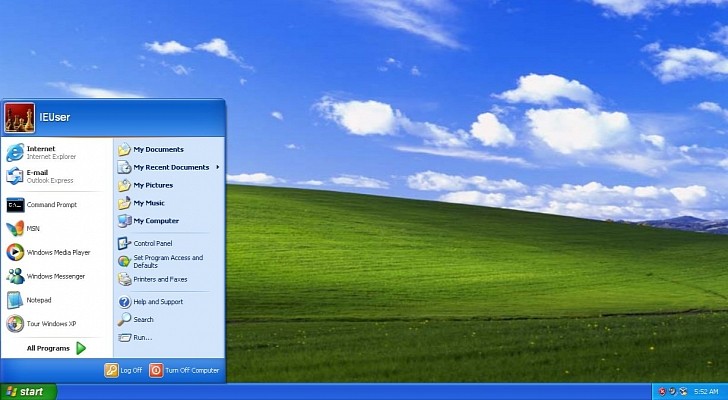

5 Important Things To Consider If You're Still Running Windows XP

Microsoft will no longer issue security updates, but many

people still need to keep Windows XP up and running safely. Here's how.

Avira and AVG are two capable and free tools that could help. Avast 2014 Free Anti-Virus is another free tool that gets good reviews, as is Kaspersky Internet Security 2014. In many cases the paid software will be more feature-rich than its free brethren. Keep in mind most of these tools themselves will quit supporting Windows XP by this time next year, so these are really only temporary fixes.

Microsoft has finally tied its good old dog, Windows XP,

to a tree ... and bashed its head in with a shovel. After 13 years of

loyal service, Microsoft has finally cut off support for Windows XP,

which means the company won't be issuing further security updates for

it. But plenty of people are going to keep using Windows XP anyway; it's

still operating in machines everywhere, from ATMs and point-of-sale

systems to computers at government agency and large corporations.

Fortunately, there are still several ways to stay

protected now that XP is vulnerable to new attacks and zero day bugs

that won't be patched by Microsoft.

The largest and laziest companies and governments agencies

are putting off the inevitable and paying Microsoft for additional

support. For instance, the U.S. Treasury Department is paying Microsoft

because it was not able to finish the migration to Windows 7 at the

Internal Revenue Service in time, and has reportedly paid millions for

new patches. The British and Dutch governments are both paying for XP

extended support as well.

These companies and governments have had years to consider

how to plan for the death of XP and now they have to pay the piper.

Extended support is not offered to smaller businesses and for those

whose personal machines are affected, only for large businesses that

strike a custom support agreement (CSA).

As an individual or small company, you are not going to be

able to get extended support from Microsoft. You probably do not want

it anyway. Your best bet? Buy some new computers.

If new hardware is not an immediate option, here are five

things you need to know about the end of Windows XP, plus one option to

consider.

What End Of Support Means

Microsoft has moved on to Windows 8 as the core of its OS

business, so it will no longer provide software updates to XP machines

from Windows Update. Technical assistance will no longer be provided by

Microsoft, and Microsoft Security Essentials downloads are not

available. Anyone who has Microsoft Security Essentials already

installed will continue to get anti-malware signature updates for a

limited time.

"Microsoft will continue to provide anti-malware

signatures and updates to the engine used within our anti-malware

products through July 14, 2015," a Microsoft spokesperson said in an

email.

The signatures are a set of characteristics used to identify malware. The engine leverages these signatures to decide if a file is malicious or not. The Malicious Software Removal Tool (MSRT) will also continue to be updated and deployed via Windows Update through July 14, 2015. Windows XP will not be supported on Forefront Client Security, Forefront Endpoint Protection, Microsoft Security Essentials, Windows Intune, or System Center after April 8, 2014, though anti-malware signature updates can still be delivered by these products through July 14, 2015.

Along with security, Windows Update also provided XP users

software updates, such as new drivers. That will not happen anymore, so

hardware may become less reliable over time.

Watch Out for Heartbleed

The dangerous Heartbleed bug does not effect Windows

machines (as far as is known at this point), but it does attack websites

that XP machines connect to. No security patches for Windows XP means

the Heartbleed vulnerability creates another layer of danger. Websites

that have not updated their OpenSSL certificates could be targets of

Heartbleed and it is possible that information from an unprotected

laptop could be exposed.

3rd Party Security Software That Supports Windows XP

A bit of good news is that Windows XP users are not completely helpless, because Microsoft will allow downloads of existing patches it has already released. Microsoft's Windows Update will still be the home for existing patches. Anti-virus software is readily available off the shelf and it's a good idea to grab one of those as well.Avira and AVG are two capable and free tools that could help. Avast 2014 Free Anti-Virus is another free tool that gets good reviews, as is Kaspersky Internet Security 2014. In many cases the paid software will be more feature-rich than its free brethren. Keep in mind most of these tools themselves will quit supporting Windows XP by this time next year, so these are really only temporary fixes.

What About Embedded Systems?

Windows Embedded devices, like scanners, ATMs and other commercial products, also run a version of Windows XP, but they have a different support cycle than the desktop version. Official Microsoft support for these products continues in many cases, for some up until April 9, 2019, according to Microsoft. Windows XP Professional for Embedded Systems is the same as Windows XP, and support for it is finished just like for most people on XP. Windows XP Embedded Service Pack 3 (SP3) and Windows Embedded for Point of Service SP3 will see extended support until Jan. 12, 2016.

Windows Embedded Standard 2009 will be supported

until Jan. 8, 2019, and Windows Embedded POSReady 2009 will live on

until April 9, 2019. These were released in 2008 and 2009, and that

explains why they are seeing much longer support. Note to Windows

Embedded product suppliers: don't let these end dates sneak up on you

like it seems to have for so many Windows XP desktop users. Plan ahead

and prosper.

Update To A New Windows Version

If you opt to update old hardware (as opposed to just

buying a new computer), the process is a bit more involved because it

entails manually updating all of the operating system for every computer

in the company. Nor is it free. It’s not free, but Microsoft does offer

a tutorial on upgrading Windows XP to Windows 8.1,

the most up to date Windows version. Windows 8.1 has hardware

requirements (generally, 1 GB of RAM and at least 16 GB of storage as

the lowest compatible devices) so some older machines simply won’t be

able to run Win 8.1.

Any time you update an operating system, it is a good idea

to back up all your data and files. Windows XP offers and emergency

backup function, but it is best to just save everything to an external

system or the cloud ahead of time. The emergency backup functions is the

Windows.old folder and it saves some files for 28 days, so those who didn’t back up or save their data can retrieve it.

Switch To Linux

Anyone ready to ditch Windows altogether who doesn’t want

to spend the money on a Mac can also opt for the open source Linux

operating system. It’s free, but does require more technical know how

with a steeper learning curve. All personal data and files will have to

be saved or backed up or they will be erased upon switching. Part of the

fun with Linux is there are 58 separate varieties, known as

distributions, on the Linux.com website.

That means there are lots of choices of different looks

and feels of operating systems. Some popular versions are Debian, Ubuntu

and Fedora. Look for distributions with good documentation and be sure

to check the hardware requirements for compatibility.

China Says Windows 8 Is Too Expensive, Plans to Replace Windows XP with Linux |

| |

|

Windows XP continues to be one of the most used

operating systems in China, but the local government is working to

replace the retired operating system with an alternative platform.

According to a report published by news agency Xinhua,

this alternative platform could be Linux, as the country is even

considering building its very own open-source operating system.

Windows XP is said to be installed on nearly 200 million computers in

China and while the most obvious choice would be an upgrade to Windows 8

or 8.1, local authorities claim that Microsoft's modern operating

system is too expensive. At the same time, Chinese officials said that

they have only recently purchased genuine copies of Windows XP, so

investing in another operating system is not an option right now.

“Windows 8 is fairly expensive and will increase government procurement

costs,” National Copyright Administration deputy director Yan Xiaohong

was quoted as saying by Xinhua.

This is how the idea of switching to Linux was actually born. While

Chinese authorities initially said that staying with Windows XP for a

while could be a solution with the help of local security vendors,

they're now looking into the open source world as well.

“Security problems could arise because of a lack of technical support

after Microsoft stopped providing services, making computers with XP

vulnerable to hackers. The government is conducting appraisal of related

security products and will promote use of such products to safeguard

users' information security,” Xiaohong continued.

Not much is known about the Chinese version of Linux, but Zhang Feng,

chief engineer of the Ministry of Industry and Information Technology,

claims that the ministry could grant the budget for the development of

such an operating system if everything goes as planned.

China is the only country that has specifically asked Microsoft to

extend Windows XP support for local computers, explaining that it has

invested millions of dollars to purchase legitimate copies of the

operating system, so it wouldn't make much sense to spend taxpayers'

money on new upgrades right now.

Microsoft, on the other hand, has refused to do so and explained that

just like all the other markets out there, China needs to upgrade its

computers to a newer OS version. Of course, Redmond was also open to

talks regarding custom support for Windows XP, but at the time of

writing this article, no agreement has been reached, so all local

computers could become vulnerable to attacks if someone finds an

unpatched vulnerability in the operating system.

|

Thursday, April 24, 2014

Is Google Too Big to Trust?

Interesting essay about how Google's lack of transparency is hurting their trust:The reality is that Google's business is and has always been about mining as much data as possible to be able to present information to users. After all, it can't display what it doesn't know. Google Search has always been an ad-supported service, so it needs a way to sell those users to advertisers -- that's how the industry works. Its Google Now voice-based service is simply a form of Google Search, so it too serves advertisers' needs.

In the digital world, advertisers want to know more than the 100,000 people who might be interested in buying a new car. They now want to know who those people are, so they can reach out to them with custom messages that are more likely to be effective. They may not know you personally, but they know your digital persona -- basically, you. Google needs to know about you to satisfy its advertisers' demands.

Once you understand that, you understand why Google does what it does. That's simply its business. Nothing is free, so if you won't pay cash, you'll have to pay with personal information. That business model has been around for decades; Google didn't invent that business model, but Google did figure out how to make it work globally, pervasively, appealingly, and nearly instantaneously.

I don't blame Google for doing that, but I blame it for being nontransparent. Putting unmarked sponsored ads in the "regular" search results section is misleading, because people have been trained by Google to see that section of the search results as neutral. They are in fact not. Once you know that, you never quite trust Google search results again. (Yes, Bing's results are similarly tainted. But Microsoft never promised to do no evil, and most people use Google.)

Conversnitch post snippets of your conversations automated

Surveillance is getting cheaper and easier:Two artists have revealed Conversnitch, a device they built for less than $100 that resembles a lightbulb or lamp and surreptitiously listens in on nearby conversations and posts snippets of transcribed audio to Twitter. Kyle McDonald and Brian House say they hope to raise questions about the nature of public and private spaces in an era when anything can be broadcast by ubiquitous, Internet-connected listening devices.This is meant as an art project to raise awareness, but the technology is getting cheaper all the time.

The surveillance gadget they unveiled Wednesday is constructed from little more than a Raspberry Pi miniature computer, a microphone, an LED and a plastic flower pot. It screws into and draws power from any standard bulb socket. Then it uploads captured audio via the nearest open Wi-Fi network to Amazon's Mechanical Turk crowdsourcing platform, which McDonald and House pay small fees to transcribe the audio and post lines of conversation to Conversnitch's Twitter account.Consumer spy devices are now affordable by the masses. For $54, you can buy a camera hidden in a smoke detector. For $80, you can buy one hidden in an alarm clock. There are many more options.

The security of various programming languages

Interesting research on the security of code written in different programming languages. We don't know whether the security is a result of inherent properties of the language, or the relative skill of the typical programmers of that language.The report.

Wednesday, April 23, 2014

NIST Removes Dual_EC_DRBG From Random Number Generator Recommendations

"National Institute of Standards and Technology (NIST) has removed the much-criticized Dual_EC_DRBG (Dual Elliptic Curve Deterministic Random Bit Generator) from its draft guidance on random number generators following a period of public comment

and review. The revised document retains three of the four previously

available options for generating pseudorandom bits required to create

secure cryptographic keys for encrypting data. NIST recommends that

people using Dual_EC_DRBG should transition to one of the other three recommended algorithms as quickly as possible."

Tuesday, April 22, 2014

Research Report Confirms Snowden’s Positive Effect on Industry

More than half of information security professionals believe the Snowden revelations have had a positive effect on the industry, according to a report released today. The research report, titled ‘Information security: From business barrier to business enabler’, surveyed 1,149 information security professionals across the globe about the industry landscape and the challenges they face.

The research report, commissioned by Infosecurity Europe, highlights the increasing importance of information security to business strategy – from the effect of Edward Snowden’s NSA leaks and the impact of big data, to the demand for boardroom education and the need to develop a long-term strategy to combat evolving threats.According to the report, information security is gradually being recognized as a business enabler.The results reveal that more effective collaboration between government and the information security industry is crucial to protecting organizations from future cyber threats, with 68% of the information security professionals surveyed believing that intelligence is not currently shared effectively between government and industry.

With only 5% of those surveyed selecting the government as their most trusted source for intelligence, it is apparent that more work needs to be done to strengthen government’s position as a source of information on potential threats.

“This is something that needs to be addressed urgently,” said Brian Honan, Founder & CEO, BH Consulting, a keynote speaker at Infosecurity Europe 2014. “Without better collaboration between industry and governments we are at a disadvantage against our adversaries. As threats and the capabilities of those looking to breach our systems evolve we need to jointly respond better in how we proactively deal with the threat.”

According to the Infosecurity Europe research report, data security is being pushed up the corporate agenda, likely catalyzed by the Snowden revelations. The NSA exposé has triggered action, with 58% believing the Snowden affair has been positive in making their business understand potential threats.

When asked whether the Snowden affair has increased the pressure applied by business to information security professionals to protect critical information, almost half (46%) of all respondents said that it has.

Data, Data & More Data

Thirty percent of information security professionals feel their organization isn’t able to make effective strategic decisions based on deluge of data they receive, and only 59% say they trust the data they receive. Considering the majority have witnessed this volume of data increase over the past 12 months, adopting a future-proof approach to information security is going to become increasingly important. A worrying 44% believe the industry has a short-termist approach to security strategy.“The way information security is perceived is changing, and events such as the Edward Snowden affair have taught both government and industry several valuable lessons”, said David Cass, Senior Vice President & Chief Information Security Officer at Elsevier, and speaker at Infosecurity Europe 2014. “Threats to security and privacy occur from outside and inside organizations. The complexity of today's threat landscape is beyond the capability of any one company or country to successfully counter on their own. Experience shows there’s clearly more work to be done until businesses understand the importance of information security to long-term strategy. This challenge, combined with the groundswell of data, supports the need for immediate change. Part of this change requires better sharing of information between government and industry."

More than Half of IT Workers Make Undocumented System Changes

Frequent IT system changes without documentation or audit processes can cause system downtime and security breaches from internal and external threats, while decreasing overall operational efficiency. Yet, a new survey has revealed that a majority of IT professionals have made undocumented changes to their IT systems that no one else knows about.

The survey

from Netwrix shows that while a full 57% have undertaken those

untracked changes, it’s especially worrying because of the frequency

with which they occur. About half (52%) of respondents said that they

make changes that impact system downtime daily or weekly. And 40% make

changes that impact security daily or weekly. Interestingly, more highly

regulated industries are making changes that impact security more

often, including healthcare (44%) and financial (46%).

“This data reveals that IT organizations are regularly making undocumented changes that impact system availability and security,” said Michael Fimin, CEO at Netwrix, in a statement. “This is a risky practice that may jeopardize the security and performance of their business. IT managers and CIOs need to evaluate the addition of change auditing to their change management processes. This will enable them to ensure that all changes – both documented and undocumented – are tracked so that answers can be quickly found in the event of a security breach or service outage.”

But even so, as many as 40% of organizations don’t have formal IT change management controls in place at all. And 62% said that they have little or no real ability to audit the changes they make, revealing serious gaps in meeting security best practice and compliance objectives.

Just 23% have an auditing process or change auditing solution in place to validate changes are being entered into a change management solution.

Given the prevalence of changes, this lack of change management is creating a dangerous environment for enterprises. The survey found that 65% have made changes that caused services to stop, and 39% have made a change that was the root cause of a security breach.

“With roughly 90% of outages being caused by failed changes, visibility into IT infrastructure changes is critical to maintaining a stable environment,” said David Monahan, research director for security and risk management at Enterprise Management Associates, in a statement. “Change auditing is also foundational to security and compliance requirements. Auditing changes in enterprise class environments requires the ability to get a high-level strategic view without sacrificing the tactical system level detail and insight extended throughout the whole system stack.”

“This data reveals that IT organizations are regularly making undocumented changes that impact system availability and security,” said Michael Fimin, CEO at Netwrix, in a statement. “This is a risky practice that may jeopardize the security and performance of their business. IT managers and CIOs need to evaluate the addition of change auditing to their change management processes. This will enable them to ensure that all changes – both documented and undocumented – are tracked so that answers can be quickly found in the event of a security breach or service outage.”

But even so, as many as 40% of organizations don’t have formal IT change management controls in place at all. And 62% said that they have little or no real ability to audit the changes they make, revealing serious gaps in meeting security best practice and compliance objectives.

Just 23% have an auditing process or change auditing solution in place to validate changes are being entered into a change management solution.

Given the prevalence of changes, this lack of change management is creating a dangerous environment for enterprises. The survey found that 65% have made changes that caused services to stop, and 39% have made a change that was the root cause of a security breach.

“With roughly 90% of outages being caused by failed changes, visibility into IT infrastructure changes is critical to maintaining a stable environment,” said David Monahan, research director for security and risk management at Enterprise Management Associates, in a statement. “Change auditing is also foundational to security and compliance requirements. Auditing changes in enterprise class environments requires the ability to get a high-level strategic view without sacrificing the tactical system level detail and insight extended throughout the whole system stack.”

Android malware repurposed to Thwart Two-factor Authentication

A malicious mobile application for Android that offers a range of espionage functions has now gone on sale in underground forums with a new trick: it’s being used by several banking trojans in an attempt to bypass the two-factor authentication method used by a range financial institutions.

Dubbed iBanking, the bot

offers a slew of phone-specific capabilities, including capturing

incoming and outgoing SMS messages, redirecting incoming voice calls and

capturing audio using the device’s microphone. But as reported by

independent researcher Kafeine,

it’s now also being used to thwart the mobile transaction authorization

number (mTAN), or mToken, authentication scheme used by several banks

throughout the world, along with Gmail, Facebook and Twitter.

Recently, RSA noted that iBanking’s source code was leaked on underground forums.

“In fact, the web admin panel source was leaked as well as a builder script able to change the required fields to adapt the mobile malware to another target,” said Jean-Ian Boutin, a researcher at ESET, in an analysis. “At this point, we knew it was only a matter of time before we started seeing some ‘creative’ uses of the iBanking application.”

To wit, it’s being used for a type of webinject that was “totally new” for ESET: it uses JavaScript, meant to be injected into Facebook web pages, which tries to lure the user into installing an Android application.

Once the user logs into his or her Facebook account, the malware tries to inject a fake Facebook verification page into the website, asking for the user’s mobile number. Once entered, the victim is then shown a page for SMS verification if it’s an Android phone being used.

The hackers are very helpful: “If the SMS somehow fails to reach the user’s phone, he can also browse directly to the URL on the image with his phone or scan the QR code,” explained Boutin. “There is also an installation guide available that explains how to install the application.”

Since the webinject is available through a well-known webinject coder, this Facebook iBanking app might be distributed by other banking trojans in the future, ESET warned. “In fact, it is quite possible that we will begin to see mobile components targeting other popular services on the web that also enforce two-factor authentication through the user’s mobile,” Boutin said.

Also, because Google has stepped up its game in filtering malicious apps from the Google Play store, some speculate that Android malware authors have had to resort to novel and convoluted methods for getting their malware installed on users’ devices.

“The iBanking/Webinject scheme uses what is becoming a standard technique: first it infects the user’s PC, then it uses this position to inject code into the user’s PC web browser on a trusted site, telling the user that the trusted site wants them to ‘sideload’ an Android app, ostensibly for security reasons,” said Jeff Davis, vice president of engineering at Quarri Technologies, in an emailed comment. “The attack even includes instructions on how to change their Android settings to allow sideloading, which should be a big red flag but apparently isn’t.”

Clearly, the PC is still the weak link in internet security, both for individuals and for enterprises, he added.

In any event, users should avoid installing apps on a mobile device using the PC. “Sideloading is a major vector for malware getting installed on Android devices,” he said. “Although Android provides a warning about sideloading making your device more vulnerable when you enable it, it seems that warning isn’t strong enough. Maybe they need bold, blinking red text saying, ‘Legitimate apps are rarely installed this way! You’re probably installing malware on your device!’”

Recently, RSA noted that iBanking’s source code was leaked on underground forums.

“In fact, the web admin panel source was leaked as well as a builder script able to change the required fields to adapt the mobile malware to another target,” said Jean-Ian Boutin, a researcher at ESET, in an analysis. “At this point, we knew it was only a matter of time before we started seeing some ‘creative’ uses of the iBanking application.”

To wit, it’s being used for a type of webinject that was “totally new” for ESET: it uses JavaScript, meant to be injected into Facebook web pages, which tries to lure the user into installing an Android application.

Once the user logs into his or her Facebook account, the malware tries to inject a fake Facebook verification page into the website, asking for the user’s mobile number. Once entered, the victim is then shown a page for SMS verification if it’s an Android phone being used.

The hackers are very helpful: “If the SMS somehow fails to reach the user’s phone, he can also browse directly to the URL on the image with his phone or scan the QR code,” explained Boutin. “There is also an installation guide available that explains how to install the application.”

Since the webinject is available through a well-known webinject coder, this Facebook iBanking app might be distributed by other banking trojans in the future, ESET warned. “In fact, it is quite possible that we will begin to see mobile components targeting other popular services on the web that also enforce two-factor authentication through the user’s mobile,” Boutin said.

Also, because Google has stepped up its game in filtering malicious apps from the Google Play store, some speculate that Android malware authors have had to resort to novel and convoluted methods for getting their malware installed on users’ devices.

“The iBanking/Webinject scheme uses what is becoming a standard technique: first it infects the user’s PC, then it uses this position to inject code into the user’s PC web browser on a trusted site, telling the user that the trusted site wants them to ‘sideload’ an Android app, ostensibly for security reasons,” said Jeff Davis, vice president of engineering at Quarri Technologies, in an emailed comment. “The attack even includes instructions on how to change their Android settings to allow sideloading, which should be a big red flag but apparently isn’t.”

Clearly, the PC is still the weak link in internet security, both for individuals and for enterprises, he added.

In any event, users should avoid installing apps on a mobile device using the PC. “Sideloading is a major vector for malware getting installed on Android devices,” he said. “Although Android provides a warning about sideloading making your device more vulnerable when you enable it, it seems that warning isn’t strong enough. Maybe they need bold, blinking red text saying, ‘Legitimate apps are rarely installed this way! You’re probably installing malware on your device!’”

Heartbleed Blows the Lid Off of Tor's Privacy

Of the many casualties of the Heartbleed flaw, the Tor anonymity browser is one of the more interesting. As a heavy user of SSL to encrypt traffic between the various Tor nodes, it’s no surprise the network announced that it was vulnerable – which potentially compromises its much-vaunted privacy function.

“Note that this bug

affects way more programs than just Tor – expect everybody who runs an

https webserver to be scrambling,” the Tor Project said in a blog.

“If you need strong anonymity or privacy on the internet, you might

want to stay away from the internet entirely for the next few days while

things settle.”

Independent investigator Collin Mulliner decided to further examine Heartbleed’s effect on Tor, and pulled a list of about 5,000 nodes to examine. Using a proof-of-concept exploit, he found 1,045 of the 5,000 nodes to be vulnerable, or about 20%. The vulnerable Tor exit nodes leak plain text user traffic, he said, meaning that hackers can lift host names, downloaded web content, session IDs and so on.

"The Heartbleed bug basically allows anyone to obtain traffic coming in and out of Tor exit nodes (given that the actual connection that is run over Tor is not encrypted itself),” Mulliner explained. “Of course a malicious party could run a Tor exit node and inspect all the traffic that passes through it, but this requires running a Tor node in the first place. Using the Heartbleed bug, anyone can query vulnerable exit nodes to obtain Tor exit traffic.”

To fix the issue, Tor is in the process of updating vulnerable nodes. In the meantime, it can create a blacklist of vulnerable Tor nodes and avoid them – something that it’s started to do. It’s a process that could lead to the network losing about 12% of its exit capacity, Tor noted.

The majority of the vulnerable Tor nodes are located in Germany, Russia, France, the Netherlands, the UK and Japan, Mulliner found.

Independent investigator Collin Mulliner decided to further examine Heartbleed’s effect on Tor, and pulled a list of about 5,000 nodes to examine. Using a proof-of-concept exploit, he found 1,045 of the 5,000 nodes to be vulnerable, or about 20%. The vulnerable Tor exit nodes leak plain text user traffic, he said, meaning that hackers can lift host names, downloaded web content, session IDs and so on.

"The Heartbleed bug basically allows anyone to obtain traffic coming in and out of Tor exit nodes (given that the actual connection that is run over Tor is not encrypted itself),” Mulliner explained. “Of course a malicious party could run a Tor exit node and inspect all the traffic that passes through it, but this requires running a Tor node in the first place. Using the Heartbleed bug, anyone can query vulnerable exit nodes to obtain Tor exit traffic.”

To fix the issue, Tor is in the process of updating vulnerable nodes. In the meantime, it can create a blacklist of vulnerable Tor nodes and avoid them – something that it’s started to do. It’s a process that could lead to the network losing about 12% of its exit capacity, Tor noted.

The majority of the vulnerable Tor nodes are located in Germany, Russia, France, the Netherlands, the UK and Japan, Mulliner found.

Comment: New Approaches Needed for Database Security and Advanced Network Threats

Today’s advanced threats require a smarter approach to database security that extends beyond detection and monitoring the usual attack surfaces and SQL injection techniques. This is the view from NetCitadel’s Duane Kuroda

As highlighted in the recent Target breach investigation, deeper threat context and push-button automatic response should be in the toolbox of information security professionals to reduce false positives, speed up prioritization, and enable immediate system lock-down to halt data theft and breeches. Network security technology has evolved to provide robust perimeter protection for today’s complex network environments. Unfortunately, traditional network security solutions have not kept pace, and perimeter security falls short when it comes to addressing the core of the network.Perimeter security’s limited visibility into the protocols used to access core network assets and a heavy reliance on signatures has hampered its ability to reliably detect attacks occurring against databases and servers in data centers. New attacks aren’t limited to the standard SQL injection techniques, but have expanded to include obfuscated and optimized SQL injections, bot redirections, privilege escalation, and abuse of form entry and API communications formats.

A new security approach is required – one designed to more actively understand the networks, sub-networks, and assets they are protecting. The ability to understand the protocols, ports, and users accessing data is also a requirement, as is the ability to quickly block them when there is a compromise. Detection systems need to go beyond simple pattern-based threat detection and be capable of accurately separating normal behavior from attacks. New approaches start with the attack surface and extend to automated tools to enforce protection. In between, tasks include monitoring the core network, protocol layers, and user behavior to aggregate and distill actionable threat information.

When data breaches hit millions of users, a well-prepared security staff should be able to press one button to isolate core databases or infected machines, and even segment networks across internal firewalls to stop the infection immediately. New approaches are cognizant of the need to automatically mitigate and contain threats in real-time, affecting and updating hundreds of enforcement devices if and when necessary.

Automated Detection Needs Automated Response

Although some new approaches and technologies can be applied to identifying advanced attacks, there are still gaping holes in the response phase. For example, many security teams still manually research a detected threat, evaluate the impact, determine who or what systems were involved, and establish the type and significance of the threat. This manual investigation is still a painstaking process that must be completed to characterize the threat and possible responses before the response team can mitigate or contain the problem.Depending on the size of the organization, this manual process occurs hundreds – if not thousands – of times per week. Many IT security teams have been at a breaking point, causing significant delays in response times and raising the risk that critical threats will not be addressed.

Recent technology advances from companies like FireEye have dramatically improved the detection of malware, and next-generation intrusion prevention (IPS) and intrusion detection (IDS) systems also help detect database attacks. One side-effect of automatic detection tools is the creation of a whole new problem – how to respond to all these new alerts?

A better and more secure approach would marry automatic detection with automatic response technologies. For robust protection, security professionals should deploy automated tools to detect, investigate, mitigate, and contain the new generation of threats affecting database security.

Context Matters

If an advanced malware detection tool or next-gen IDS reported a threat, would your incident response team automatically know which database or servers were targeted? A more effective approach would connect-the-dots between threats, targeted databases and users, adding insight and initiating automatic responses to verified threats. Automatic investigation tools could detect access to the database network segment or server, and then automatically fire off containment updates to block access by infected systems to those servers and networks, or initiate mitigation protocols that slow traffic access, elevate logging, trigger additional packet capture, and more.Likewise, new technologies in this approach would be able to use attack data to vet the source and destination of suspected traffic while understanding the severity and urgency of the detected threat, as well as the likelihood of a false positive. In a best-case scenario, the new approach would generate reports that security teams can review before confirming whether a threat is critical or a false-positive, and then enable mitigation or containment – network wide – with the push of a button.

Enterprises need to substantially reduce the time and effort required to contextualize detected threats, and quickly contain modern malware and targeted attacks. This is a vital requirement to a novel approach; however, some ‘new’ technologies are extremely rules heavy or require custom coding, turning the hope of automated incident response into a long, drawn-out study in software development, testing, and maintenance – which further increase risk instead of decreasing it.

Where Do We Go from Here?

As attacks on databases and networks become more advanced, so do the requirements for rapid response. It is likely we’ll see attackers continuing to up their game. Organizations that are able to invest more in best practices as well as automated detection investigation, mitigation, and containment will be less likely to experience a damaging attack and can minimize the damage when one does occur.The quest for the better threat management mouse trap is in full swing.

HeartBleed 101

The major security flaw known as Heartbleed, which may affect nearly two-thirds of websites online, threatens to expose masses of usernames, passwords and other sensitive information worldwide. And, predict experts, the ramifications will be with us for years.

In fact, it may have already

done so, considering that it amounts to a nuclear option for web

hackers. Information security vendors are coming out en masse with tips

and tools in the wake of the news to help consumers battle the fallout.

As McAfee pointed out in a blog, Heartbleed is not a virus, but rather a mistake written into OpenSSL—a security standard encrypting communications between users and the servers provided by a majority of online services. The mistake makes it viable for hackers to extract data from massive databases containing user names, passwords, private data and so on.

The HeartBleed flaw queers the deal by getting in the middle of that communication to send a malicious heartbeat signal to servers. The server is then tricked into sending back a random chunk of its memory to the user who sent the malicious heartbeat, which will likely have email addresses, user names and passwords—everything someone needs to either access accounts and/or mount phishing expeditions via spam. In some cases, the information returned could be the keys to the server itself, which would thus compromise whole swathes of the internet.

“The severity of this vulnerability cannot be overstated”, McAfee concluded.

But it won’t be that easy, said ThetaRay, in a blog. “The immediate thought on everyone’s mind is that when there is a bug, there is a patch, and the first thing to do is apply it to stop the bleeding”, it said. “Although this may appear to be a solution and a way of allaying the panic, applying patches to the many vulnerable platforms can take at least six to twelve months. Months will pass before vulnerable vendors, and all levels of end users, return to safe OpenSSL-dependent activity.”

In other words, the gloomy forecast is that Heartbleed will live on, well after patches are issued and applied.

“The bug is so far-reaching into internal networks, server communications, and products that were already shipped out to end users that it will take a very long time until it is completely fixed”, said ThetaRay. “While this process takes its course, the even more troubling thought on everybody’s mind is how malicious actors are planning to exploit this flaw and cause maximum damage while they still have the opportunity.”

As a result, HeartBleed opens the door for another discussion on the efficacy of passwords for data protection in the first place.

“My advice? Scrap passwords altogether”, said Chris Russell, CTO at Swivel Secure, in an emailed statement to Infosecurity. “The inconvenient truth is that web users are neither capable nor are they willing to maintain the complex, rolling system of passwords that today’s web environment demands. Passwords have proven over and over again that they are no longer fit to secure the increasing amount of personal data we now store online and in the cloud.”

Some say the problem is more with password management and resetting practices—and that two-factor authentication can solve many issues. "Passwords are not an invalid 'something-you-know,' but the way the industry uses passwords is flawed", said Peter Tapling, president and CEO of Authentify. "Requiring a longer, more difficult password string makes for a poor user experience. Further, many password reset functions use an email link to reset the password. If a fraudster has all of your bank account login information, they likely have your email login as well, making email ineffective as a second authentication channel. The barriers to hijacking an account protected only by a password are just too low.”

“The first thing you need to do is check to make sure your online services, like Yahoo and PayPal, have updated their servers in order to compensate for the Heartbleed vulnerability”, counseled McAfee, which has also released a Heartbleed Checker tool to help consumers easily gauge their susceptibility to the potentially dangerous effects of the Heartbleed bug.

And, very importantly, “do not change your passwords until you’ve done this”, the anti-virus firm stressed. “A lot of outlets are reporting that you need to do this as soon as possible, but the problem is that Heartbleed primarily affects the server end of communications, meaning if the server hasn’t been updated with Heartbleed in mind, then changing your password will not have the desired outcome.”

Obviously, online services affected by the flaw should be sending emails alerting users that they have updated their servers. “But beware: this is a prime time for phishing attacks—attacks which impersonate services in order to steal your credentials—so be extra careful when viewing these messages”, McAfee warned.

As McAfee pointed out in a blog, Heartbleed is not a virus, but rather a mistake written into OpenSSL—a security standard encrypting communications between users and the servers provided by a majority of online services. The mistake makes it viable for hackers to extract data from massive databases containing user names, passwords, private data and so on.

The Heartbleed Lowdown

For SSL to work to secure communications between a user and a website, the computer needs to establish a link with the web server. The end machine will send out a “heartbeat”, which is a ping to make sure a server is online. If it is, it sends a heartbeat back and a secure connection is created.The HeartBleed flaw queers the deal by getting in the middle of that communication to send a malicious heartbeat signal to servers. The server is then tricked into sending back a random chunk of its memory to the user who sent the malicious heartbeat, which will likely have email addresses, user names and passwords—everything someone needs to either access accounts and/or mount phishing expeditions via spam. In some cases, the information returned could be the keys to the server itself, which would thus compromise whole swathes of the internet.

“The severity of this vulnerability cannot be overstated”, McAfee concluded.

Heartbleed Ramifications

Some firms are advising users to assume that the worst has already been done, considering that researchers think that it has gone undetected for at least two years. So, companies should be preparing teams to move to detection and post-breach response plans.But it won’t be that easy, said ThetaRay, in a blog. “The immediate thought on everyone’s mind is that when there is a bug, there is a patch, and the first thing to do is apply it to stop the bleeding”, it said. “Although this may appear to be a solution and a way of allaying the panic, applying patches to the many vulnerable platforms can take at least six to twelve months. Months will pass before vulnerable vendors, and all levels of end users, return to safe OpenSSL-dependent activity.”

In other words, the gloomy forecast is that Heartbleed will live on, well after patches are issued and applied.

“The bug is so far-reaching into internal networks, server communications, and products that were already shipped out to end users that it will take a very long time until it is completely fixed”, said ThetaRay. “While this process takes its course, the even more troubling thought on everybody’s mind is how malicious actors are planning to exploit this flaw and cause maximum damage while they still have the opportunity.”

Protecing from Heartbleed

To stay fully protected, users should wait for the flaw to be patched, and apply a new, unique, long password for each site visited, and plan to switch them out for other new, unique, long passwords on a monthly basis. Which is, of course, much easier said than done .As a result, HeartBleed opens the door for another discussion on the efficacy of passwords for data protection in the first place.

“My advice? Scrap passwords altogether”, said Chris Russell, CTO at Swivel Secure, in an emailed statement to Infosecurity. “The inconvenient truth is that web users are neither capable nor are they willing to maintain the complex, rolling system of passwords that today’s web environment demands. Passwords have proven over and over again that they are no longer fit to secure the increasing amount of personal data we now store online and in the cloud.”

Some say the problem is more with password management and resetting practices—and that two-factor authentication can solve many issues. "Passwords are not an invalid 'something-you-know,' but the way the industry uses passwords is flawed", said Peter Tapling, president and CEO of Authentify. "Requiring a longer, more difficult password string makes for a poor user experience. Further, many password reset functions use an email link to reset the password. If a fraudster has all of your bank account login information, they likely have your email login as well, making email ineffective as a second authentication channel. The barriers to hijacking an account protected only by a password are just too low.”

Time to Take Action

The discussion about better account protection and the security of the internet as a whole will rage on for the foreseeable future over HeartBleed, but for now, users do need to take action.“The first thing you need to do is check to make sure your online services, like Yahoo and PayPal, have updated their servers in order to compensate for the Heartbleed vulnerability”, counseled McAfee, which has also released a Heartbleed Checker tool to help consumers easily gauge their susceptibility to the potentially dangerous effects of the Heartbleed bug.

And, very importantly, “do not change your passwords until you’ve done this”, the anti-virus firm stressed. “A lot of outlets are reporting that you need to do this as soon as possible, but the problem is that Heartbleed primarily affects the server end of communications, meaning if the server hasn’t been updated with Heartbleed in mind, then changing your password will not have the desired outcome.”

Obviously, online services affected by the flaw should be sending emails alerting users that they have updated their servers. “But beware: this is a prime time for phishing attacks—attacks which impersonate services in order to steal your credentials—so be extra careful when viewing these messages”, McAfee warned.

Dan Geer on Heartbleed and Software Monocultures

Good essay:To repeat, Heartbleed is a common mode failure. We would not know about it were it not open source (Good). That it is open source has been shown to be no talisman against error (Sad). Because errors are statistical while exploitation is not, either errors must be stamped out (which can only result in dampening the rate of innovation and rewarding corporate bigness) or that which is relied upon must be field upgradable (Real Politik). If the device is field upgradable, then it pays to regularly exercise that upgradability both to keep in fighting trim and to make the opponent suffer from the rapidity with which you change his target.The whole thing is worth reading.

Info on Russian Bulk Surveillance

Good information:Russian law gives Russia’s security service, the FSB, the authority to use SORM (“System for Operative Investigative Activities”) to collect, analyze and store all data that transmitted or received on Russian networks, including calls, email, website visits and credit card transactions. SORM has been in use since 1990 and collects both metadata and content. SORM-1 collects mobile and landline telephone calls. SORM-2 collects internet traffic. SORM-3 collects from all media (including Wi-Fi and social networks) and stores data for three years. Russian law requires all internet service providers to install an FSB monitoring device (called “Punkt Upravlenia”) on their networks that allows the direct collection of traffic without the knowledge or cooperation of the service provider. The providers must pay for the device and the cost of installation.

Collection requires a court order, but these are secret and not shown to the service provider. According to the data published by Russia’s Supreme Court, almost 540,000 intercepts of phone and internet traffic were authorized in 2012. While the FSB is the principle agency responsible for communications surveillance, seven other Russian security agencies can have access to SORM data on demand. SORM is routinely used against political opponents and human rights activists to monitor them and to collect information to use against them in “dirty tricks” campaigns. Russian courts have upheld the FSB’s authority to surveil political opponents even if they have committed no crime. Russia used SORM during the Olympics to monitor athletes, coaches, journalists, spectators, and the Olympic Committee, publicly explaining this was necessary to protect against terrorism. The system was an improved version of SORM that can combine video surveillance with communications intercepts.

Monday, April 21, 2014

Getting Started with Docker

What is Docker?

Docker is a Container.While a Virtual Machine is a whole other guest computer running on top of your host computer (sitting on top of a layer of virtualization), Docker is an isolated portion of the host computer, sharing the host kernel (OS) and even its bin/libraries if appropriate.

To put it in an over-simplified way, if I run a CoreOS host server and have a guest Docker Container based off of Ubuntu, the Docker Container contains the parts that make Ubuntu different from CoreOS.

This is one of my favorite images which describes the difference:

This image is found on these slides provided by Docker.

Getting Docker

Docker isn't compatible with Macintosh's kernel unless you install boot2docker. I avoid that and use CoreOS in Vagrant, which comes with Docker installed.I highly recommend CoreOS as a host machine for your play-time with Docker. They are building a lot of awesome tooling around Docker.My

Vagrantfile for CoreOS is as follows:config.vm.box = "coreos"

config.vm.box_url = "http://storage.core-os.net/coreos/amd64-generic/dev-channel/coreos_production_vagrant.box"

config.vm.network "private_network",

ip: "172.12.8.150"# This will require sudo access when using "vagrant up"

config.vm.synced_folder ".", "/home/core/share",

id: "core",

:nfs => true,

:mount_options => ['nolock,vers=3,udp']config.vm.provider :vmware_fusion do |vb, override|

override.vm.box_url = "http://storage.core-os.net/coreos/amd64-generic/dev-channel/coreos_production_vagrant_vmware_fusion.box"

end # plugin conflict

if Vagrant.has_plugin?("vagrant-vbguest") then

config.vbguest.auto_update = false

end If you are using CoreOS, dont be dismayed when it tries to restart on you. It's a "feature", done during auto-updates. You may, however, need to runvagrant reloadto restart the server so Vagrant set up file sync and networking again.

Your First Container

This is the unfortunate baby-step which everyone needs to take to first get their feet wet with Docker. This won't show what makes Docker powerful, but it does illustrate some important points.Docker has a concept of "base containers", which you use to build off of. After you make changes to a base container, you can save those change and commit them. You can even push your boxes up to index.docker.io.

One of Docker's most basic images is just called "Ubuntu". Let's run an operation on it.

If the image is not already downloaded in your system, it will download it first from the "Ubuntu repository". Note the use of similar terminology to version control systems such as Git.Run Bash:

docker run ubuntu /bin/bashdocker ps (similar to our familiar Linux ps) - you'll see no containers listed, as none are currently running. Run docker ps -a, however, and you'll see an entry!CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

8ea31697f021 ubuntu:12.04 /bin/bash About a minute ago Exit 0 loving_pare/bin/bash, but there wasn't any running process to keep it alive.A Docker container only stays alive as long as there is an active process being run in it.

Keep that in mind for later. Let's see how we can run Bash and poke around the Container. This time run:

docker run -t -i ubuntu /bin/bash What's that command doing?

docker run- Run a container-t- Allocate a (pseudo) tty-i- Keep stdin open (so we can interact with it)ubuntu- use the Ubuntu base image/bin/bash- Run the bash shell

Tracking Changes

Exit out of that shell (ctrl+d or type exit) and run docker ps -a again. You'll see some more output similar to before:CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

30557c9017ec ubuntu:12.04 /bin/bash About a minute ago Exit 127 elegant_pike

8ea31697f021 ubuntu:12.04 /bin/bash 22 minutes ago Exit 0 loving_pare30557c9017ec in my case). Use that ID and run docker diff <container id>. For me, I see:core@localhost ~ $ docker diff 30557c9017ec

A /.bash_history

C /dev

A /dev/kmsg.bash_history file, a /dev directory and a /dev/kmsg

file. Minor changes, but tracked changes never the less! Docker tracks

any changes we make to a container. In fact, Docker allows us make

changes to an image, commit those changes, and then push those changes

out somehwere. This is the basis of how we can deploy with Docker.Let's install some base items into this Ubuntu install and save it as our own base image.

# Get into Bash

docker run -t -i ubuntu /bin/bash

# Install some stuff

apt-get update

apt-get install -y git ack-grep vim curl wget tmux build-essential python-software-propertiesdocker ps -a again. Grab the latest container ID and run another diff (docker diff <Container ID>):core@localhost ~ $ docker diff 5d4bdae290a4

> A TON OF FILE LISTED HEREdocker commit <Container ID> <Name>:<Tag>core@localhost ~ $ docker commit 5d4bdae290a4 fideloper/docker-example:0.1

c07e8dc7ab1b1fbdf2f58c7ff13007bc19aa1288add474ca358d0428bc19dba6 # You'll get a long hash as a Success messagedocker images:core@localhost ~ $ docker images

REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE

fideloper/docker-example 0.1 c07e8dc7ab1b 22 seconds ago 455.1 MB

ubuntu 13.10 9f676bd305a4 6 weeks ago 178 MB

ubuntu saucy 9f676bd305a4 6 weeks ago 178 MB

ubuntu 13.04 eb601b8965b8 6 weeks ago 166.5 MB

ubuntu raring eb601b8965b8 6 weeks ago 166.5 MB

ubuntu 12.10 5ac751e8d623 6 weeks ago 161 MB

ubuntu quantal 5ac751e8d623 6 weeks ago 161 MB

ubuntu 10.04 9cc9ea5ea540 6 weeks ago 180.8 MB

ubuntu lucid 9cc9ea5ea540 6 weeks ago 180.8 MB

ubuntu 12.04 9cd978db300e 6 weeks ago 204.4 MB

ubuntu latest 9cd978db300e 6 weeks ago 204.4 MB

ubuntu precise 9cd978db300e 6 weeks ago 204.4 MBMore excitingly, however, is that we also have our own image

fideloper/docker-example and its tag 0.1!Building a Server with Dockerfile

Let's move onto building a static web server with a Dockerfile. The Dockerfile provides a set of instructions for Docker to run on a container. This lets us automate installing items - we could have used a Dockerfile to install git, curl, wget and everything else we installed above.Create a new directory and

cd into it. Because we're installing Nginx, let's create a default configuration file that we'll use for it.Create a file called

default and add:server {

root /var/www;

index index.html index.htm;

# Make site accessible from http://localhost/

server_name localhost;

location / {

# First attempt to serve request as file, then

# as directory, then fall back to index.html

try_files $uri $uri/ /index.html;

}

}Next, create a file named

Dockerfile and add the following, changing the FROM section as suitable for whatever you named your image:FROM fideloper/docker-example:0.1

RUN echo "deb http://archive.ubuntu.com/ubuntu precise main universe" > /etc/apt/sources.list

RUN apt-get update

RUN apt-get -y install nginx

RUN echo "daemon off;" >> /etc/nginx/nginx.conf

RUN mkdir /etc/nginx/ssl

ADD default /etc/nginx/sites-available/default

EXPOSE 80

CMD ["nginx"]FROMwill tell Docker what image (and tag in this case) to base this off ofRUNwill run the given command (as user "root") usingsh -c "your-given-command"ADDwill copy a file from the host machine into the container- This is handy for configuration files or scripts to run, such as a process watcher like supervisord, systemd, upstart, forever (etc)

EXPOSEwill expose a port to the host machine. You can expose multiple ports like so:EXPOSE 80 443 8888CMDwill run a command (not usingsh -c). This is usually your long-running process. In this case, we're simply starting Nginx.- In production, we'd want something watching the nginx process in case it fails

docker build -t nginx-example .Successfully built 88ff0cf87aba (your new container ID will be different).Check out what you have now, run

docker images:core@localhost ~/webapp $ docker images

REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE

nginx-example latest 88ff0cf87aba 35 seconds ago 468.5 MB

fideloper/docker-example 0.1 c07e8dc7ab1b 29 minutes ago 455.1 MB

...other Ubuntu images below ...docker ps -a:core@localhost ~/webapp $ docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

de48fa2b142b 8dc0de13d8be /bin/sh -c #(nop) CM About a minute ago Exit 0 cranky_turing

84c5b21feefc 2eb367d9069c /bin/sh -c #(nop) EX About a minute ago Exit 0 boring_babbage

3d3ed53987ec 77ca921f5eef /bin/sh -c #(nop) AD About a minute ago Exit 0 sleepy_brattain

b281b7bf017f cccba2355de7 /bin/sh -c mkdir /et About a minute ago Exit 0 high_heisenberg

56a84c7687e9 fideloper/docker-e... /bin/sh -c #(nop) MA 4 minutes ago Exit 0 backstabbing_turing

... other images ...Finally, run the web server

Let's run this web server! Usedocker run -p 80:80 -d nginx-example (assuming you also named yours "nginx-example" when building it).The-p 80:80binds the Container's port 80 to the guest machines, so if wecurl localhostor go to the server's IP address in our browser, we'll see the results of Nginx processing requests on port 80 in the container.

core@localhost ~/webapp $ docker run -d nginx-example

73750fc2a49f3b7aa7c16c0623703d00463aa67ba22d2108df6f2d37276214cc # Success!

core@localhost ~/webapp $ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

a085a33093f4 nginx-example:latest nginx 2 seconds ago Up 2 seconds 80/tcp determined_bardeendocker ps instead of docker ps -a - We're seeing a currently active and running Docker container. Let's curl localhost:core@localhost ~/webapp $ curl localhost/index.htmld

<html>

<head><title>500 Internal Server Error</title></head>

<body bgcolor="white">

<center><h1>500 Internal Server Error</h1></center>

<hr><center>nginx/1.1.19</center>

</body>

</html>index.html file for Nginx to fall back onto. Let's stop this docker instance via docker stop <container id>:core@localhost ~/webapp $ docker stop a085a33093f4

a085a33093f4index.html page from where we'll share it.# I'm going to be sharing the /home/core/share directory on my CoreOS machine

echo "Hello, Docker fans!" >> /home/core/share/index.htmldocker run -v /home/core/share:/var/www:rw -p 80:80 -d nginx-exampledocker run- Run a container-v /path/to/host/dir:/path/to/container/dir:rw- The volumes to share. Noterwis read-write. We can also definerofor "read-only".-p 80:80- Bind the host's port 80 to the containers.-d nginx-exampleRun our imagenginx-example, which has the "CMD" setup to runnginx

curl localhost:core@localhost ~/webapp $ curl localhost

Hello, Docker fans!

Note that the IP address I used is that of my CoreOS server. I set the IP address in my

Vagrantfile. I don't need to know the IP address given to my Container in such a simple example, altho I can find it by running docker inspect <Container ID>.core@localhost ~/webapp $ docker inspect a0b531aa00f4

[{

"ID": "a0b531aa00f475b0025d8edce09961077eedd82a190f2e2f862592375cad4dd5",

"Created": "2014-03-20T22:38:22.452820503Z",

... a lot of JSON ...

"NetworkSettings": {

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"Gateway": "172.17.42.1",

"Bridge": "docker0",

"PortMapping": null,

"Ports": {

"80/tcp": [

{

"HostIp": "0.0.0.0",

"HostPort": "80"

}

]

}

},

... more JSON ...

}]Linking Containers

Not fully covered here (for now) is the ability to link two or more containers together. This is handy if containers need to communicate with eachother. For example, if your application container needs to communiate with a database container. Linking lets you have some infrastrcture be separate from your application.For example:

Start a container and name it something useful (in this case,

mysql, via the -name parameter):docker run -p 3306:3306 -name mysql -d some-mysql-image-d name:db (where db is an arbitrary name used in the container's environment variables):docker run -p 80:80 -link mysql:db -d some-application-imagesome-application-image will have environment variables available such as DB_PORT_3306_TCP_ADDR=172.17.0.8 and DB_PORT_3306_TCP_PORT=3306 which you application can use to know the database location.Here's an example of a MySQL Dockerfile

The Epic Conclusion

So, we fairly easily can build servers, add in our application code, and then ship our working applications off to a server. Everything in the environment is under your control.In this way, we can actually ship the entire environment instead of just code.

P.S. - Tips and Tricks

Cleaning Up

If you're like me and make tons of mistakes and don't want the record of all your broken images and containers lying around, you can clean them them:- To remove a container:

docker rm <Container ID> - To remove all containers:

docker rm $(docker ps -a -q) - To remove images:

docker rmi <Container ID> - To remove all images:

docker rmi $(docker ps -a -q)

Note: You must remove all containers using an image before deleting the image

Base Images

I always use Phusian's Ubuntu base image. It installs/enables a lot of items you may not think of, such as the CRON daemon, logrotate, ssh-server (you want to be able to ssh into your server, right?) and other important items. Read the Readme file of that project to learn more.Especially note Phusion's use of an "insecure" SSH key to get you started with the ability to SSH into your container and play around, while it runs another process such as Nginx.Orchard, creators of Fig, also have a ton of great images to use to learn from. Also, Fig looks like a really nice way to handle Docker images for development environments.

Sunday, April 20, 2014

Overreacting to Risk

This is a crazy overreaction:A 19-year-old man was caught on camera urinating in a reservoir that holds Portland's drinking water Wednesday, according to city officials.I understand the natural human disgust reaction, but do these people actually think that their normal drinking water is any more pure? That a single human is that much worse than all the normal birds and other animals? A few ounces distributed amongst 38 million gallons is negligible.

Now the city must drain 38 million gallons of water from Reservoir 5 at Mount Tabor Park in southeast Portland.

Another story.

Metaphors of Surveillance

There's a new study looking at the metaphors we use to describe surveillance.Over 62 days between December and February, we combed through 133 articles by 105 different authors and over 60 news outlets. We found that 91 percent of the articles contained metaphors about surveillance. There is rich thematic diversity in the types of metaphors that are used, but there is also a failure of imagination in using literature to describe surveillance.The only literature metaphor used is the book 1984.

Over 9 percent of the articles in our study contained metaphors related to the act of collection; 8 percent to literature (more on that later); about 6 percent to nautical themes; and more than 3 percent to authoritarian regimes.

On the one hand, journalists and bloggers have been extremely creative in attempting to describe government surveillance, for example, by using a variety of metaphors related to the act of collection: sweep, harvest, gather, scoop, glean, pluck, trap. These also include nautical metaphors, such as trawling, tentacles, harbor, net, and inundation. These metaphors seem to fit with data and information flows.

Subscribe to:

Posts (Atom)