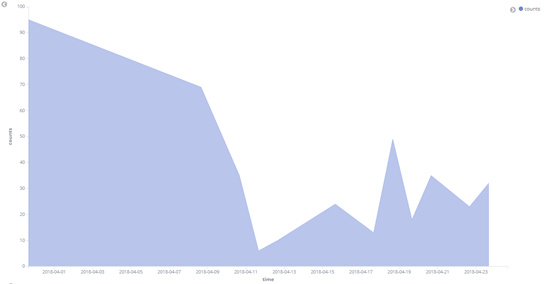

Mozilla has formally launched Firefox Monitor, a privacy-engineered website that hooks up to Troy Hunt’s Have I Been Pwned? (HIBP) breach notification database.

The site – which despite the Firefox tag is open to anyone – can be used either to check an email address against known breaches, or to register for breach notification should that address be detected in future breaches logged by HIBP.

Both of these things can already be done from the main HIBP website, which raises the question: what does Firefox Monitor do that HIBP doesn’t?

There are several answers. The first is that connecting HIBP to a site run by and branded under the Firefox name will promote breach checking and notification to a larger audience.

Expanding the numbers signing up for notification from the hundreds of thousands to the millions would be a major advance for breach detection, not least because HIBP has a record of detecting breaches before some breached companies do. (The Disqus breach of 2012 is one example.)

The earlier a user hears that their email address is part of a breached cache of data, the sooner they can change their password. Until HIBP, that might have been years after the address entered the public domain – or perhaps never.

A second reason has to do with Mozilla’s interest in integrating breach notification into the Firefox browser itself, a logical next step which has already been completed by the password management tool 1Password.

It’s not clear what progress Mozilla is making towards this, although a developer involved with the project, Matt Grimes, made the following comment in his overview of Firefox Monitor’s origins:

A hidden technical challenge that services like HIBP and its partners face is how internet users can enter searches (for breached email addresses or even specific passwords, which HIBP can also check) as unsalted hashes without this becoming a secondary privacy risk.

In theory, the search could be entered as a salted hash, but that would greatly increase the computational demands when coping with large numbers of queries.

The answer proposed by the company that hosts the Firefox Monitor service on Mozilla’s behalf, Cloudflare, is a mathematical idea called k-anonymity. The company offers a full description of how this works, but the essence is as follows:

The website generates a local hash of the given email address using SHA-1 but sends only the first six characters of that hash to HIBP via its API. The API returns a list of all the hashes it holds that begin with the queried string (around 477 on average), and each of these is compared locally with the full hash. If a match is found, that email address or password is in the database and has been breached.
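The exchange described above can be sketched in a few lines of Python. This is an illustrative model only, not Firefox Monitor's or HIBP's actual code: `FakeBreachIndex` is a hypothetical in-memory stand-in for the remote service, and normalising the address (trimming and lower-casing) before hashing is an assumption made for the example.

```python
import hashlib
from collections import defaultdict

PREFIX_LEN = 6  # hex characters sent to the service, per the description above


def sha1_hex(email: str) -> str:
    """Uppercase SHA-1 hex digest of an address (normalisation is an assumption)."""
    return hashlib.sha1(email.strip().lower().encode("utf-8")).hexdigest().upper()


class FakeBreachIndex:
    """Hypothetical stand-in for the remote service: hash prefix -> full hashes."""

    def __init__(self, breached_emails):
        self._buckets = defaultdict(set)
        for email in breached_emails:
            digest = sha1_hex(email)
            self._buckets[digest[:PREFIX_LEN]].add(digest)

    def range_query(self, prefix: str) -> set:
        # The service sees only the prefix and returns every hash sharing it.
        return set(self._buckets.get(prefix, set()))


def is_breached(email: str, index: FakeBreachIndex) -> bool:
    digest = sha1_hex(email)                              # hashed locally
    candidates = index.range_query(digest[:PREFIX_LEN])   # only the prefix leaves the client
    return digest in candidates                           # full comparison stays local


index = FakeBreachIndex(["alice@example.com", "bob@example.com"])
print(is_breached("alice@example.com", index))
print(is_breached("carol@example.com", index))
```

The point of the design is that the service can narrow the answer down to a bucket of candidates but cannot tell which hash in that bucket, if any, the client actually holds.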

As Cloudflare puts it: “Instead of seeking to salt hashes to the point at which they are unique, we instead introduce ambiguity into what the client is requesting.”

Both sides benefit: the user keeps the full hash to themselves, while HIBP doesn’t have to return more hashes than it needs to. For extra security against attackers, Firefox Monitor doesn’t store anything in its database and caches the user’s results in an encrypted session.

Can this be leveraged in your own web or mobile applications to check whether your users’ data appears in a breach database?

Yes. GitHub and other products have started using this approach to notify affected users, who can then change their passwords.
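As a rough idea of what this might look like in an application, here is a minimal sketch that checks a password against HIBP's public Pwned Passwords range endpoint. That endpoint takes the first five characters of the SHA-1 hash (not six) and returns `SUFFIX:COUNT` lines. The function names and the User-Agent string are placeholders chosen for this example, and error handling is left out.

```python
import hashlib
import urllib.request

RANGE_URL = "https://api.pwnedpasswords.com/range/"


def fetch_range(prefix: str) -> str:
    """Download all hash suffixes that share the given 5-character prefix."""
    request = urllib.request.Request(
        RANGE_URL + prefix,
        headers={"User-Agent": "breach-check-sketch"},  # placeholder name
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8")


def count_in_range(suffix: str, body: str) -> int:
    """Return the breach count for a suffix in a 'SUFFIX:COUNT' listing, else 0."""
    for line in body.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix:
            return int(count)
    return 0


def pwned_count(password: str, fetch=fetch_range) -> int:
    """How many times a password appears in the breach corpus (0 if not found)."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    prefix, suffix = digest[:5], digest[5:]
    # Only the prefix is sent; the suffix is matched locally.
    return count_in_range(suffix, fetch(prefix))
```

A signup or password-change handler could call `pwned_count(new_password)` and ask the user to pick something else when the result is greater than zero. The `fetch` parameter is there so the network call can be swapped out in tests.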